ElevenLabs UI seeks to simplify that with an open-source collection of React components for voice experiences, conversational agents, and real-time audio. It’s built on shadcn/ui, so the components are copied into your own codebase rather than installed as an opaque dependency, and you can modify them freely.

The approach is practical: instead of depending on a closed library, you add reusable blocks to your project. For teams already working with React, Next.js, and Tailwind, adoption feels natural. You don’t have to relearn half of frontend development to add a working microphone.

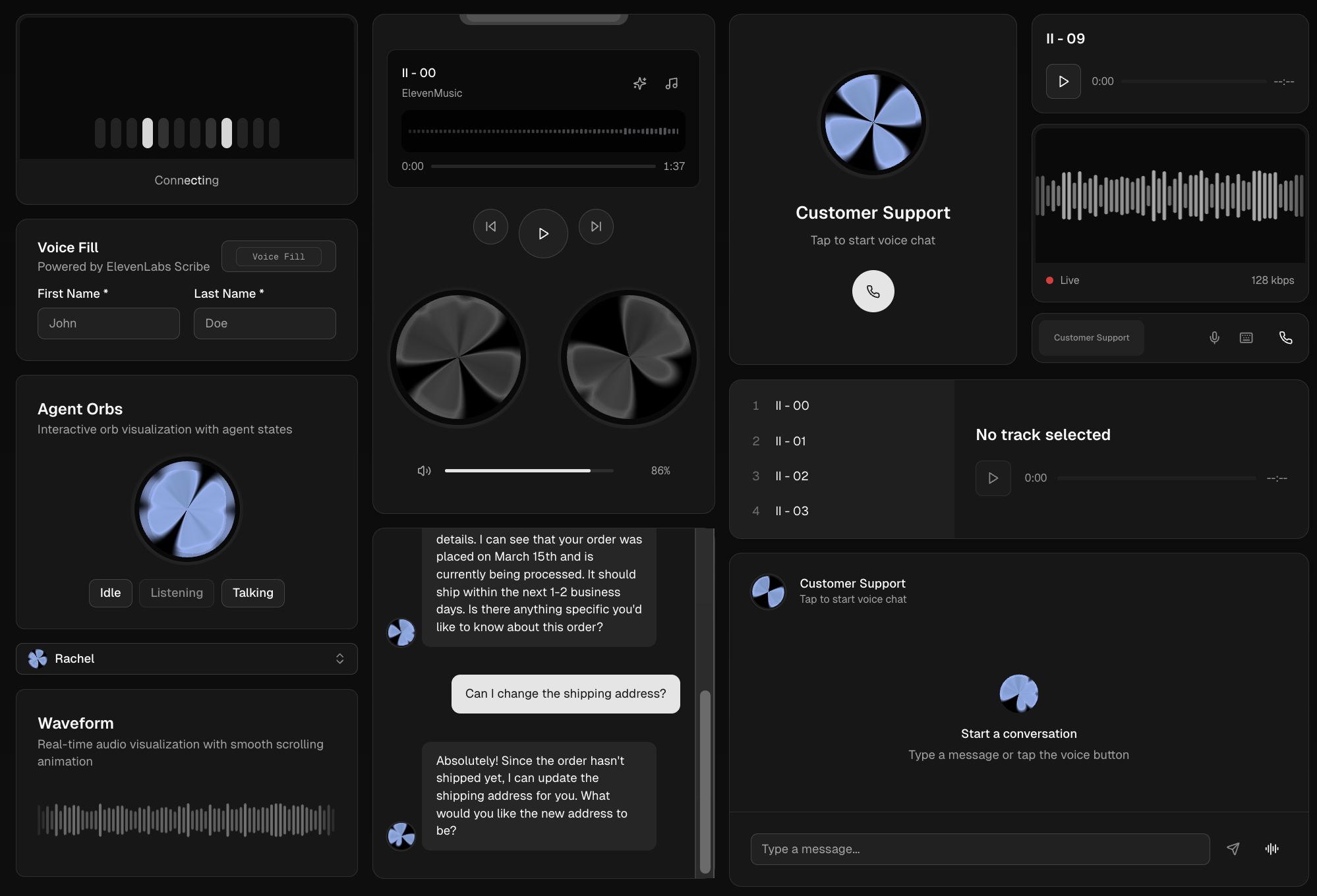

Among the available components are Conversation Bar for voice chats with WebRTC, Speech Input for transcription, Voice Picker for selecting voices, Waveform for audio visualization, and messaging elements for conversational interfaces. In other words, it covers the tedious part of building multimodal products.

For developers, the real value lies in the use cases: automated support, internal copilots, hands-free assistants, accessibility tools, conversational onboarding, or voice commerce. Instead of spending weeks on audio UX and synchronization, you can focus on business logic.

It also makes ElevenLabs’ ambition clear: to move from being a voice provider to being a complete platform for conversational products. That brings integration advantages, although it’s advisable to keep the architecture decoupled so you don’t end up locked in to a single provider.
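
One way to picture that decoupling is a thin interface between your business logic and whichever voice service sits behind it. The sketch below is only an illustration: `SpeechProvider`, `EchoProvider`, and `answerByVoice` are hypothetical names invented for this example and are not part of ElevenLabs UI or any ElevenLabs SDK. A real adapter would wrap the vendor's client behind the same interface.

```typescript
// Hypothetical provider-agnostic contract. None of these names come from
// ElevenLabs UI; they only illustrate the decoupling idea.
interface SpeechProvider {
  readonly name: string;
  transcribe(audio: Uint8Array): Promise<string>;
  synthesize(text: string, voiceId: string): Promise<Uint8Array>;
}

// Stand-in provider for local development and tests: it treats each byte
// as a character code instead of calling any real speech service.
class EchoProvider implements SpeechProvider {
  readonly name = "echo";

  async transcribe(audio: Uint8Array): Promise<string> {
    return Array.from(audio, (byte) => String.fromCharCode(byte)).join("");
  }

  async synthesize(text: string, _voiceId: string): Promise<Uint8Array> {
    return Uint8Array.from(text, (ch) => ch.charCodeAt(0));
  }
}

// Business logic depends only on the interface, so swapping vendors means
// writing a new adapter class, not touching this function.
async function answerByVoice(
  provider: SpeechProvider,
  audio: Uint8Array,
  reply: (question: string) => string,
  voiceId: string = "default",
): Promise<Uint8Array> {
  const question = await provider.transcribe(audio);
  return provider.synthesize(reply(question), voiceId);
}
```

With this shape, the UI components can stay vendor-specific while the application code only ever sees `SpeechProvider`, which keeps a later migration limited to one adapter.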

In summary, ElevenLabs UI doesn’t promise to change the world. It just saves time for those who need to launch voice experiences without reinventing every button. And that’s already quite rare.